Artificial Intelligence (AI) has become a buzzword across various industries, promising to revolutionize how we live, work, and communicate.

AI has rapidly evolved in recent years, fueling technological breakthroughs and unlocking new possibilities, from self-driving cars to intelligent virtual assistants.

This comprehensive guide on Artificial Intelligence (AI) will provide an overview of what AI is, its potential, and its impact on industries and everyday life.

The guide will also delve into the terminology, technologies, and applications associated with AI and the ethical considerations surrounding its use.

By the end of this guide, readers will better understand AI and its growing significance in today’s world.

Key Takeaways:

- AI Development and Types: AI has evolved significantly since its conception, progressing from simple algorithms to complex systems capable of learning and making decisions. It is categorized into narrow AI (weak AI), general AI, and artificial superintelligence, with narrow AI being the most commonly implemented form today, designed for specific tasks.

- Machine Learning and Algorithms: At the heart of AI are algorithms and machine learning processes, including supervised learning, unsupervised learning, and reinforcement learning. Each plays a critical role in how AI systems learn from data, recognize patterns, and improve their decision-making capabilities over time.

- Ethical Considerations: The deployment of AI raises important ethical questions, particularly concerning fairness, privacy, job displacement, and the broader impact on society. Ensuring AI systems are unbiased and make decisions that reflect ethical considerations is crucial.

- AI in Industries: AI has transformative potential across various sectors, including healthcare, finance, transportation, and more, offering innovations that can enhance efficiency, accuracy, and outcomes.

- Hardware and Software for AI: The development and deployment of AI rely on both software and specialized hardware, such as CPUs, GPUs, and TPUs. These technologies are essential for running complex AI models and applications efficiently.

- Natural Language Processing (NLP): NLP is a significant area of AI that facilitates machines’ understanding, interaction, and generation of human language. It plays a crucial role in developing applications like chatbots and translation services.

- Data’s Role: Data is foundational to AI’s success, serving as the critical input for training AI models. Both the quality and the quantity of that data significantly affect the performance and efficacy of AI systems.

What is Artificial Intelligence?

Artificial Intelligence (AI) is the branch of computer science that focuses on developing intelligent machines that can perform tasks that typically require human intelligence.

AI systems are programmed to learn from data, recognize patterns, and make decisions based on input. AI has become increasingly influential across the healthcare, finance, and transportation industries in recent years.

The idea of artificial, thinking beings has existed for centuries, with early examples appearing in Greek mythology and medieval European folklore. However, it wasn’t until the 1950s that researchers began to develop the first AI algorithms and systems.

Today, AI is categorized into different types: narrow AI, general AI, and artificial superintelligence.

Understanding Narrow AI, General AI, and Artificial Superintelligence

Narrow AI, also known as weak AI, is designed to perform specific tasks within a limited range of functions. Examples of narrow AI include image or speech recognition software.

General AI, also known as strong AI, would be able to perform any task that requires human-like intelligence, such as reasoning, problem-solving, and decision-making across domains. General AI does not yet exist, but it is a long-term goal of much AI research.

Artificial Superintelligence would go beyond human intelligence and could outperform even the most intelligent humans in every aspect. This level of AI does not yet exist but is a topic of debate among researchers and futurists.

As AI continues to evolve, it can potentially change the world as we know it. The next section will explore the key terminology and concepts in AI.

Basic Terminology in AI

Before diving into the world of Artificial Intelligence (AI), it is essential to understand some basic terminologies.

As AI involves machine learning and neural networks, let us explore the meaning and significance of these terms.

Machine learning refers to the ability of machines to learn from data, improving their performance on a specific task over time. This learning process relies on algorithms that enable machines to identify patterns and make predictions based on previous data.

Neural networks are a machine learning architecture loosely modeled on how the brain works: a network of interconnected nodes processes information in layers, with each layer passing its output to the next. Neural networks are used in many AI applications, including image and speech recognition.
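To make the idea of nodes arranged in layers more concrete, here is a minimal sketch of a forward pass through a tiny network in plain Python. The weights and biases are hypothetical values picked by hand for illustration; a real network would learn them during training, and practical work would normally use a framework rather than hand-written loops.

```python
import math

def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One layer: each node takes a weighted sum of the inputs, adds a bias, applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

# Hypothetical parameters, chosen by hand for illustration only.
hidden_weights = [[0.5, -0.5], [1.0, 1.0]]  # two hidden nodes, two inputs each
hidden_biases = [0.0, -1.0]
output_weights = [[1.0, -1.0]]              # one output node fed by both hidden nodes
output_biases = [0.0]

def forward(inputs):
    """Pass the inputs through the hidden layer, then through the output layer."""
    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)

prediction = forward([1.0, 1.0])
print(prediction)  # a single number between 0 and 1
```

Each call to `dense_layer` corresponds to one layer of interconnected nodes; stacking more calls would give a deeper network.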

Data science is an interdisciplinary field that involves data extraction, analysis, and management. In AI, data science is crucial in providing the necessary information for machines to learn and make decisions.

“AI learns from data. The more data it has, the better it gets.”

How Does AI Work?

For AI to function, it requires a combination of basic algorithms, training, and data sets.

Algorithms are the rules and instructions given to the AI system to follow. These algorithms process data and make decisions based on that data.

Training is the process of feeding data into an AI system to teach it how to recognize patterns and make predictions based on the data it has been given. Over repeated passes through the data, this process typically makes the AI system more accurate.

Data sets serve as the foundation of AI development. They provide the necessary information for the system to learn and make decisions.

When developing an AI system, it is essential to have a large, diverse, and accurate data set that properly reflects the real-world scenarios the system will encounter.

The training process involves splitting the data into two sets: the training set and the testing set. The training set is used to teach the AI system, while the testing set is used to evaluate the system’s accuracy and performance.
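The split described above can be sketched in a few lines of plain Python. The function name `train_test_split`, the 80/20 ratio, and the example data are assumptions made for this illustration (libraries such as scikit-learn offer their own, more complete helper for the same job).

```python
import random

def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

examples = [(x, 2 * x) for x in range(10)]  # made-up (input, label) pairs
train_set, test_set = train_test_split(examples)
print(len(train_set), len(test_set))  # prints "8 2"
```

Shuffling before splitting matters: if the data is sorted in some way, taking the last rows as the testing set would give a test that does not resemble the training data.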

Once an AI system is fully trained, it can be used for various tasks, such as image recognition, language processing, and decision-making.

Applications of AI

Artificial Intelligence (AI) has revolutionized various industries, including healthcare, business, and transportation.

| Industry | AI Application |
|---|---|
| Healthcare | Medical diagnosis and treatment planning with AI-powered decision support systems. Drug discovery and development with predictive analytics and machine learning. Patient monitoring and virtual assistance with remote sensing and natural language processing. |
| Business | Customer service and engagement with chatbots and personal assistants. Marketing and advertising with predictive analytics and recommendation systems. Financial analysis and forecasting with machine learning and predictive modeling. |
| Transportation | Route optimization and traffic management with predictive analytics and machine learning. Autonomous vehicles and drones with computer vision and natural language processing. Supply chain optimization and logistics with predictive modeling and optimization algorithms. |

AI has created new products and services, improved operational efficiency, and enhanced customer experiences. We can expect to see even more innovative applications with further advancements in AI technology.

Ethics and AI

As AI becomes more prevalent in society and industries, it is crucial to consider the ethical implications of its development and use.

One of the key concerns is data privacy.

With the amount of data being collected and analyzed by AI systems, there is a risk of sharing or using sensitive information without consent. It is important for AI developers and users to prioritize protecting personal data and being transparent about how it is being used.

Another ethical consideration is decision-making algorithms.

AI systems are often used to make decisions that affect people’s lives, such as in the criminal justice system or hiring practices. It is important to ensure these algorithms are fair and unbiased, not perpetuating systemic inequalities.

Additionally, there are broader ethical implications to consider, such as the potential for job loss or the impact of AI on society.

It is important for individuals and organizations to critically evaluate the impact of AI and make ethical decisions regarding its use.

In short, ethical considerations must be at the forefront of AI development and deployment. Data privacy, decision-making, and broader societal implications all play a role in ensuring the responsible use of AI.

AI vs. Machine Learning vs. Deep Learning

While the terms Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL) are often used interchangeably, they actually represent distinct concepts and technologies. Understanding their differences and relationships is essential for anyone interested in AI development.

AI

AI is the broadest of the three terms, encompassing any technology that can perform tasks that typically require human intelligence.

This includes everything from image and speech recognition to natural language processing and decision-making.

Machine Learning

Machine Learning is a subset of AI that involves training algorithms to recognize patterns in data and make predictions or decisions based on that analysis.

Unlike traditional programming, in which a programmer writes specific instructions for a computer to follow, Machine Learning allows algorithms to learn from data and improve their performance over time without being explicitly programmed.

Deep Learning

Deep Learning is a subset of Machine Learning that involves training neural networks with many layers. These networks are loosely inspired by the structure of the human brain rather than being faithful simulations of it.

Deep Learning enables AI models to recognize complex patterns in large datasets, making it ideal for image recognition and natural language processing applications.

While AI, Machine Learning, and Deep Learning are distinct concepts, they are often used together to create powerful and sophisticated AI applications that can perform tasks that would have been impossible just a few years ago.

Getting Started with AI

If you are interested in artificial intelligence (AI) and want to learn more, a few essential skills will help you get started.

From programming to data analysis, these skills will help you master AI development and prepare you for the industry.

One of the critical programming languages for AI is Python, a versatile language that is beginner-friendly.

Python is a popular language in the AI development community because of its simplicity and readability. Its syntax and libraries make learning and developing machine learning algorithms easy, and it has a large online community that provides great resources for beginners.

The language has libraries facilitating data manipulation, preprocessing, and visualization, making it ideal for AI development.

As a beginner starting with AI, it is essential to take on practical projects.

These projects will help you apply what you’ve learned and give you a better understanding of AI technology. Some beginner projects you can embark on include image classification, sentiment analysis, and chatbot development.

In conclusion, building programming skills in a language such as Python is vital for getting started with AI. Once you are comfortable with the language, you can start working on AI projects to gain practical experience in the field.

Machine Learning Algorithms

Machine learning algorithms are the backbone of artificial intelligence.

They enable machines to learn from data and improve without being explicitly programmed.

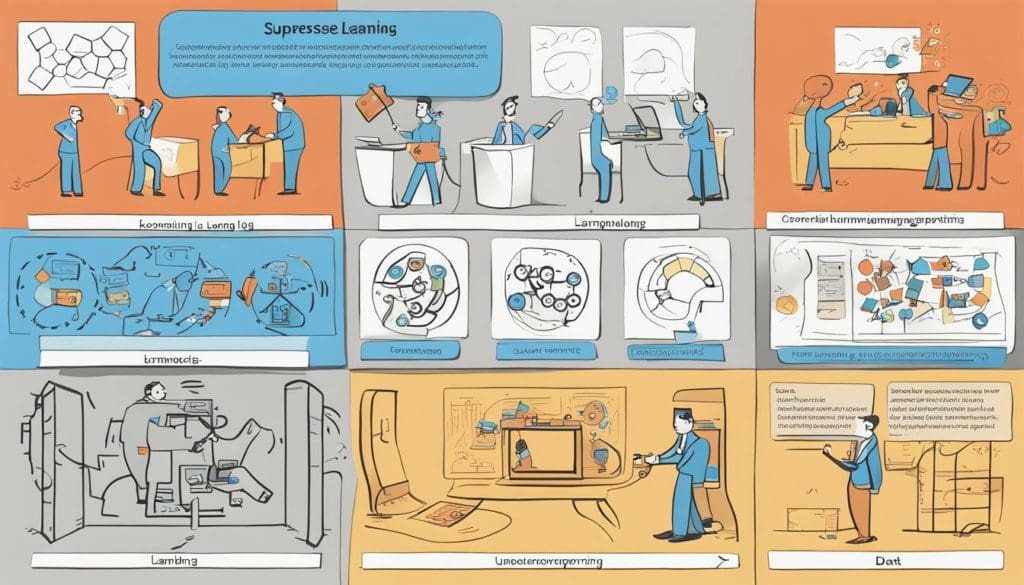

The three main types of machine learning are supervised learning, unsupervised learning, and reinforcement learning.

Supervised Learning

Supervised learning is a type of machine learning where the model is trained on labeled data.

This means that the data has already been classified or labeled, and the model learns to make predictions based on this data. For example, a supervised learning algorithm can be trained to recognize handwritten digits.

The model is fed images of digits and the corresponding label (the number the digit represents). The algorithm then learns to recognize and correctly classify the image patterns.

Supervised learning is used in various fields, including image recognition, speech recognition, and natural language processing. It is also used in data mining and predictive analytics.
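As a rough illustration of learning from labeled examples, the sketch below classifies tiny "digit" images with a nearest-neighbor rule: a new image receives the label of the most similar training image. The 3x3 bitmaps and the function names are made up for this example, and nearest-neighbor is only one of many supervised methods; real digit recognizers use far larger images and usually neural networks.

```python
def squared_distance(a, b):
    """Sum of squared pixel differences between two equally sized images."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def predict_nearest(train_pairs, features):
    """Return the label of the training example closest to features."""
    _, label = min(train_pairs, key=lambda pair: squared_distance(pair[0], features))
    return label

# Made-up 3x3 "images" flattened to 9 pixels (1 = ink), each paired with its label.
labeled_images = [
    ((1, 1, 1, 1, 0, 1, 1, 1, 1), 0),  # a ring shape, labeled as digit 0
    ((0, 1, 0, 0, 1, 0, 0, 1, 0), 1),  # a vertical stroke, labeled as digit 1
    ((1, 1, 0, 0, 1, 0, 0, 1, 0), 1),  # a stroke with a small flag, also digit 1
]

noisy_one = (0, 1, 0, 0, 1, 0, 0, 1, 1)   # vertical stroke with one stray pixel
noisy_zero = (1, 1, 1, 1, 0, 1, 1, 1, 0)  # ring with one missing pixel

print(predict_nearest(labeled_images, noisy_one))   # prints 1
print(predict_nearest(labeled_images, noisy_zero))  # prints 0
```

The labels do all the teaching here: the code never describes what a 0 or a 1 looks like, it only compares new images against examples whose answers are already known.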

Unsupervised Learning

Unsupervised learning is a type of machine learning where the model is trained on unlabeled data.

This means that the data has not been classified or labeled beforehand.

The model learns to find patterns in the data and group similar data points together. For example, an unsupervised learning algorithm can cluster customer data based on purchasing behavior.

The algorithm would group customers with similar buying patterns, even if their purchases do not fall under the same category.

Unsupervised learning is used in clustering, anomaly detection, and dimensionality reduction. It can also be used for market segmentation and image segmentation.
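The customer-clustering example can be sketched with k-means, one common clustering method (the text above does not name a specific algorithm, so this choice is an assumption). The spending figures and starting centers are made up, and the version below handles only one-dimensional data to stay short.

```python
def k_means_1d(values, centers, iterations=10):
    """Plain k-means on numbers: assign each value to its nearest center, then recompute centers."""
    centers = list(centers)
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Each center moves to the mean of its cluster; an empty cluster keeps its old center.
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters

monthly_spend = [12, 15, 14, 90, 95, 88, 13, 92]  # made-up customer data, no labels
centers, clusters = k_means_1d(monthly_spend, centers=[0, 100])
print(centers)   # prints [13.5, 91.25]
print(clusters)  # prints [[12, 15, 14, 13], [90, 95, 88, 92]]
```

No one told the algorithm which customers are "low spenders" or "high spenders"; the two groups emerge from the data alone.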

Reinforcement Learning

Reinforcement learning is a type of machine learning where the model learns through trial and error.

It is based on the concept of rewards and punishments. The model takes actions in an environment and receives rewards or punishments based on its actions. The goal of the model is to maximize its reward over time.

For example, a reinforcement learning algorithm can teach a robot to navigate a maze. The robot would take actions and receive rewards or punishments based on its actions. The algorithm would learn to navigate the maze by maximizing its reward.

Reinforcement learning is used in robotics, gaming, and recommendation systems. It can also be used in autonomous vehicles and control systems.
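The maze example can be sketched with tabular Q-learning, one widely used reinforcement learning method (chosen here for illustration; the text does not prescribe an algorithm). The "maze" is simplified to a five-cell corridor, and the reward values, learning rate, and other settings are assumptions made for this sketch.

```python
import random

N_STATES = 5          # corridor cells 0..4; the exit is cell 4
ACTIONS = (-1, +1)    # step left or step right
GOAL = N_STATES - 1

def step(state, action):
    """Move within the corridor; reward 1.0 at the exit, a small penalty otherwise."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else -0.01
    return next_state, reward

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table q[state][action_index] of expected long-term reward."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore: try a random action
            else:
                best = max(q[state])  # exploit: best known action, ties broken randomly
                a = rng.choice([i for i in range(2) if q[state][i] == best])
            next_state, reward = step(state, ACTIONS[a])
            # Q-learning update: nudge the estimate toward reward + discounted best future value.
            target = reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

q_table = train()
policy = ["left" if row[0] > row[1] else "right" for row in q_table[:GOAL]]
print(policy)  # prints ['right', 'right', 'right', 'right']
```

The small penalty on every non-exit step plays the role of the "punishment" described above: it makes wandering costly, so the learned policy heads straight for the exit.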

Understanding these different machine learning algorithms is essential for developing and deploying effective AI models.

By choosing the right algorithm for the task, developers can ensure that their models are accurate and efficient.

Natural Language Processing (NLP)

Natural Language Processing (NLP) is a field within AI that focuses on enabling machines to understand and interact with human language.

It involves text analysis, translation, and the development of chatbots to facilitate communication between humans and machines.

NLP is becoming increasingly important as more businesses seek to leverage AI’s power to better understand and engage with their customers.

Text analysis is one of the key applications of NLP.

With the help of machine learning algorithms, NLP can identify patterns and insights within large volumes of text data. These techniques can be used to extract valuable information from customer reviews, social media posts, and online articles.
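As a deliberately simple illustration of text analysis, the sketch below scores customer reviews by counting words from two small word lists. This is a rule-based stand-in, not a machine learning model: the word lists, function names, and reviews are all made up for the example, and production systems typically learn such signals from labeled data instead.

```python
import re
from collections import Counter

# Hypothetical word lists for illustration; real systems usually learn these signals from data.
POSITIVE = {"great", "love", "fast", "helpful"}
NEGATIVE = {"slow", "broken", "rude", "late"}

def tokenize(text):
    """Lowercase the text and split it into word tokens (letters and apostrophes)."""
    return re.findall(r"[a-z']+", text.lower())

def sentiment_score(text):
    """Count positive minus negative words; above 0 leans positive, below 0 leans negative."""
    counts = Counter(tokenize(text))
    return sum(counts[w] for w in POSITIVE) - sum(counts[w] for w in NEGATIVE)

reviews = [
    "Great product, fast delivery, and the support team was helpful.",
    "Arrived late and the screen was broken.",
]
scores = [sentiment_score(r) for r in reviews]
print(scores)  # prints [3, -2]
```

A word-counting approach like this misses negation and sarcasm ("not great" still counts as positive), which is one reason learned models are preferred for real workloads.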

Translation is another application of NLP.

Machine translation has come a long way in recent years, with services such as Google Translate now able to produce generally accurate translations across many language pairs.

This has significant implications for businesses looking to engage with customers in different regions of the world.

Chatbots are perhaps the most visible example of NLP in action.

These computer programs use NLP to understand and respond to human language, enabling them to provide customer service, answer questions, and perform other tasks.

Chatbots will likely become even more sophisticated and versatile as AI technology advances.

Challenges in NLP

Despite its potential, NLP faces several challenges.

One of the biggest is the problem of language ambiguity. Human language is incredibly complex and can be interpreted in many different ways. This makes it difficult for machines to understand and respond to human speech accurately.

Another challenge is the need for large amounts of training data.

NLP models require vast amounts of data to be trained effectively. This can pose a problem when working with languages or dialects with limited resources.

Finally, privacy concerns are also a significant issue. NLP models are often trained on, or used to process, large amounts of text that may contain personal data.

This raises questions about data privacy and how companies are using this data.

Future of NLP

Despite these challenges, the future of NLP looks bright.

We expect NLP to become even more sophisticated and capable as AI technology advances. This will open up new opportunities for businesses to engage with customers and gain insights from vast amounts of text data.

As NLP technology continues to develop, we will likely see it being used in more and more applications.

From customer service bots to translation tools, NLP has the potential to revolutionize the way we interact with technology and with each other.

Computer Vision

Computer Vision is a field of Artificial Intelligence that focuses on enabling computers to interpret and understand the visual world around them.

It involves processing and analyzing images and videos, enabling machines to identify and recognize objects, faces, and patterns.

This section will delve into image recognition, facial recognition, and object detection, showcasing how AI enables these capabilities.

Image recognition is the ability of machines to identify objects, patterns, and attributes within images. This functionality is particularly useful in industries such as manufacturing, where computer vision can be used to inspect products for defects or quality control purposes.

Facial recognition is another common application of computer vision in AI.

By analyzing facial features and patterns, machines can accurately identify and verify individuals, enabling applications such as security access or even unlocking smartphones.

Object detection is the capability of machines to identify and locate objects within an image or video stream.

This application is crucial in areas such as autonomous vehicles, where machines must be able to identify and track objects in real time to avoid collisions.

Data Analytics in AI

Data analytics is a crucial part of the AI development process. Preprocessing and analyzing data is essential for creating accurate and reliable AI models.

Data preprocessing involves cleaning and transforming raw data for machine learning algorithms. This process includes data normalization, feature selection, and data reduction.
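Data normalization, one of the preprocessing steps mentioned above, can be sketched with min-max scaling, which rescales a column of numbers into the range 0 to 1. Min-max scaling is only one normalization scheme (standardizing to zero mean and unit variance is another), and the function name and sample values are made up for this example.

```python
def min_max_normalize(values):
    """Rescale numbers linearly so the smallest maps to 0.0 and the largest to 1.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant column: there is no spread to rescale
    return [(v - lo) / (hi - lo) for v in values]

ages = [20, 30, 40, 60]  # made-up raw feature column
print(min_max_normalize(ages))  # prints [0.0, 0.25, 0.5, 1.0]
```

Putting features on a common scale keeps a column with large raw values (such as income) from dominating one with small values (such as age) in distance-based or gradient-based algorithms.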

Data visualization presents data in visual formats such as charts, graphs, and maps. It helps to identify trends, patterns, and relationships in data, making it easier for stakeholders to understand and make decisions based on the data.

In AI, data analytics is used in various applications such as predictive modeling, anomaly detection, and sentiment analysis.

With the increasing amount of data generated daily, data analytics is becoming an even more critical component of AI development.

“Data is the new oil. It’s valuable, but if unrefined it cannot really be used.” – Clive Humby

AI Hardware and Software

While Artificial Intelligence (AI) is known to be a software technology, hardware plays a significant role in supporting its development and deployment.

Central Processing Units (CPUs), Graphics Processing Units (GPUs), and Tensor Processing Units (TPUs) are some of the hardware components that facilitate AI development.

CPUs are the most widely available type of processor used for AI development. They have a general-purpose architecture, and their processing power is sufficient for many smaller AI workloads, although large deep learning models usually benefit from more specialized hardware.

Conversely, GPUs are specialized hardware designed for parallel computing, making them ideal for running deep learning models.

TPUs are an even more specialized processor designed by Google specifically for AI applications. They are optimized for matrix multiplication and can deliver faster processing speeds than CPUs and GPUs for specific AI tasks.

In addition to hardware, software frameworks are critical for AI development.

TensorFlow is a commonly used open-source software library for building and deploying machine learning and deep learning models. It allows developers to create and train models on various hardware configurations, including CPUs, GPUs, and TPUs.

Other popular software frameworks include PyTorch, Keras, and Caffe.

In short, hardware such as CPUs, GPUs, and TPUs and software frameworks such as TensorFlow work together to support model training and deployment, so understanding the role of both is essential for building effective AI systems.

Deploying and Optimizing AI Models

Deploying an AI model involves taking the trained model and making it available in a production environment.

This involves various steps, including selecting the right hardware and software resources and optimizing the model for performance.

Optimizing an AI model requires reducing its resource requirements while maintaining or improving its accuracy. This can involve techniques such as quantization, pruning, and compression.
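To give a feel for two of these techniques, the sketch below applies magnitude pruning (zeroing out small weights) and a simple symmetric 8-bit quantization (storing weights as small integers plus one shared scale factor) to a short list of numbers. The function names, threshold, and weights are assumptions for illustration; frameworks provide their own, more sophisticated tooling for both steps.

```python
def prune_by_magnitude(weights, threshold):
    """Set weights whose absolute value is below threshold to zero."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.01, 0.8]  # made-up model weights
pruned = prune_by_magnitude(weights, threshold=0.05)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)

print(pruned)     # prints [0.5, -1.27, 0.0, 0.8]
print(quantized)  # prints [50, -127, 0, 80]
```

The integers take less memory than floats, and `restored` differs from `pruned` by at most half a quantization step per weight, which is the accuracy-for-size trade-off that optimization has to manage.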

One important consideration when deploying and optimizing AI models is the hardware used to run them.

Graphics processing units (GPUs) are commonly used for training models because they handle large parallel workloads well, while central processing units (CPUs) are often sufficient for inference (running the trained model), especially for smaller models. Tensor Processing Units (TPUs) are specialized hardware designed by Google specifically for AI workloads and can improve performance and reduce costs for models that suit them.

Another crucial factor for deploying and optimizing AI models is the software used.

Popular AI frameworks such as TensorFlow and PyTorch provide tools for training and deploying models. TensorFlow, for instance, offers TensorFlow Serving, a high-performance serving system for Machine Learning models, which supports CPU and GPU serving.

Overall, deploying and optimizing AI models can be a complex process that requires expertise and careful consideration.

However, by selecting the right hardware and software resources and applying optimization techniques, organizations can harness the power of AI to solve real-world problems.

Conclusion

Artificial Intelligence (AI) is a rapidly growing field with the potential to revolutionize industries and daily life.

AI has already made significant contributions to healthcare, transportation, and business, and its impact is only expected to increase.

As this comprehensive guide has shown, understanding AI requires knowledge of its history, basic principles, and terminology, including machine learning, neural networks, and data science.

It’s also important to consider the ethical implications of AI, such as data privacy and decision-making algorithms.

Continued Learning

Beyond this guide, those interested in AI can continue to develop their skills and knowledge by exploring machine learning algorithms like supervised, unsupervised, and reinforcement learning.

They can also dive into fields like Natural Language Processing (NLP) and Computer Vision, which have practical applications in marketing, health, and safety industries.

With the right tools, like the programming language Python and software frameworks like TensorFlow, individuals can begin their own AI projects, addressing problems relevant to their interests and expertise.

By staying up-to-date on AI advancements and considering their impact on society, individuals can contribute to the development and ethical implementation of artificial intelligence.

FAQ

Q: What is Artificial Intelligence?

A: Artificial Intelligence (AI) is the simulation of human-like intelligence in machines programmed to think and learn like humans.

It involves the development of computer systems that can perform tasks without explicit instructions using algorithms and machine learning.

Q: What are the different types of AI?

A: There are different types of AI, including:

- Narrow AI: AI designed to perform a specific task, such as facial or voice recognition.

- General AI: AI that can perform any intellectual task that a human can do.

- Artificial Superintelligence: AI that surpasses human intelligence in virtually every aspect.

Q: What are some key terminologies in AI?

A: Some key terminologies in AI include:

- Machine Learning: A branch of AI that enables machines to learn and make predictions or decisions without being explicitly programmed.

- Neural Networks: A computational model inspired by the human brain that is used to process complex data and recognize patterns.

- Data Science: The study and analysis of large data sets to extract meaningful insights and patterns.

Q: How does AI work?

A: AI works by using algorithms and data to simulate human-like intelligence.

It involves training AI models on large datasets, which allows them to learn patterns and make predictions or decisions. The performance of AI models can be improved through optimization and fine-tuning.

Q: What are some applications of AI?

A: AI has various applications across industries, including:

- Healthcare: AI can be used for disease detection, medical diagnosis, and personalized medicine.

- Business: AI can automate repetitive tasks, improve customer service, and enhance decision-making.

- Transportation: AI can enable autonomous vehicles, optimize traffic flow, and improve logistics.

Q: What are the ethical considerations surrounding AI?

A: Some ethical considerations surrounding AI include:

- Data Privacy: AI systems often rely on large amounts of personal data, raising concerns about privacy and security.

- Decision-Making Algorithms: AI systems can make decisions that impact individuals, which raises questions about accountability and transparency.

- Impact on Jobs: Automating tasks through AI can lead to job displacement and inequality.

Q: What is the difference between AI, machine learning, and deep learning?

A: AI is the broader concept of machines being able to carry out tasks in a way that we consider “intelligent.”

Machine learning is a subset of AI that focuses on algorithms that allow machines to learn from and make predictions or decisions based on data.

Deep learning is a subset of machine learning that utilizes neural networks with multiple layers to perform complex tasks.

Q: How can I get started with AI?

A: To get started with AI, having a strong foundation in programming is helpful, particularly in languages like Python.

You can also explore online courses and resources that offer beginner-friendly projects to practice and apply your skills.

Q: What are some common machine learning algorithms?

A: Some common machine learning algorithms include:

- Supervised Learning: Algorithms that learn from labeled examples to make predictions or decisions.

- Unsupervised Learning: Algorithms that learn from unlabeled data to discover patterns or structures.

- Reinforcement Learning: Algorithms that learn through interactions with an environment to maximize rewards.

Q: What is Natural Language Processing (NLP)?

A: Natural Language Processing (NLP) is a field of AI that focuses on the interaction between computers and human language.

It involves tasks such as text analysis, translation, and developing chatbots that can understand and generate human-like language.

Q: What is Computer Vision?

A: Computer Vision is a field of AI that enables machines to understand and interpret visual data, such as images and videos. It involves tasks such as image recognition, facial recognition, and object detection.

Q: What is the role of data analytics in AI?

A: Data analytics plays a crucial role in AI by providing the foundation for building and training AI models.

It involves tasks such as data preprocessing, which prepares the data for analysis, and data visualization, which helps understand and interpret the data.

Q: What are the hardware and software requirements for AI?

A: The hardware requirements for AI can include CPUs (Central Processing Units), GPUs (Graphics Processing Units), and TPUs (Tensor Processing Units), which are specialized processors for accelerating AI computations.

Software frameworks like TensorFlow are commonly used for developing and deploying AI models.

Q: How can I deploy and optimize AI models?

A: Deploying and optimizing AI models involves taking the trained models from development to production.

This includes considerations such as choosing the right infrastructure, optimizing the performance of the models, and monitoring their performance over time.